Practical AI Privacy: A 6-week online Maven masterclass

Posted on Mon 30 March 2026 in classes

AI usage (both intended and not) is increasing in our products, software and lives. For some, this is a welcome way to automate tedious tasks; for others, an intrusion that doesn't seem to end. For everyone, AI changes how you might think about and evaluate data privacy.

At workplaces, there are often both top-down and bottom-up incentives to automate and manage your work with AI. Increasingly, these tasks touch sensitive data, documents and workflows. How can you automate these workflows safely? What are the best practices with regard to privacy and security?

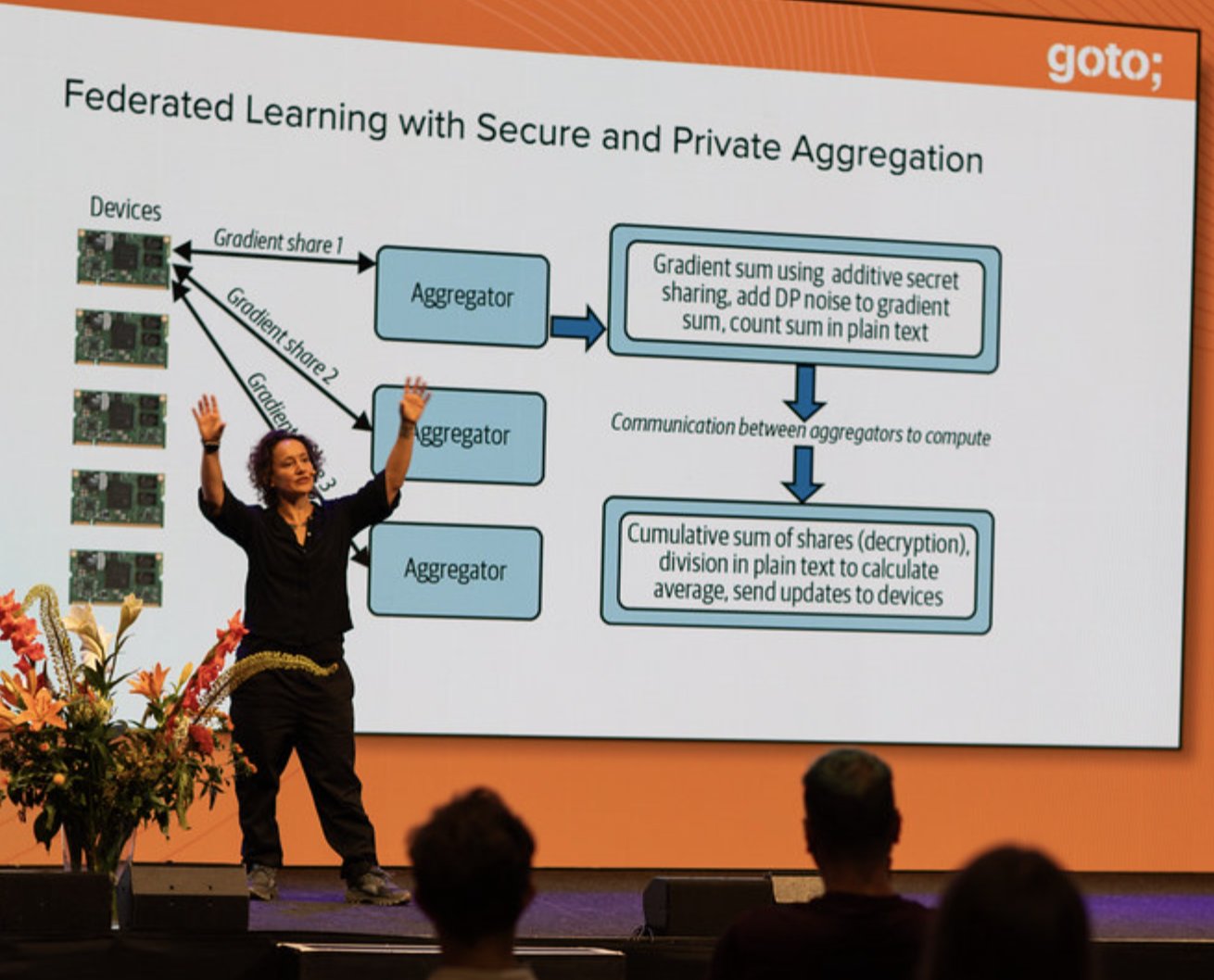

Katharine on Practical Data Privacy at GOTO Amsterdam 2023

I've been considering these questions since long before LLMs came on the scene. My book Practical Data Privacy (O'Reilly 2023) is one of the most recommended introductions to privacy controls in data, AI and ML workflows.

The Practical AI Privacy masterclass is my 2026 update to that book. The course focuses on inference rather than training, and on information-heavy workloads such as LLMs, diffusion models and advanced setups like agentic workflows. It aims to give AI model users (not model developers) the ability to better understand and control their privacy and security.

The course creates a safe place to experiment with and learn about AI privacy risks and controls, so you'll build real skills you can use both at work and in your own personal AI usage. You'll leave the course able to assess, evaluate and address privacy risks presented by large models and AI workflows. You'll have code you wrote, analyzed and tested at your fingertips, for work and personal projects.

Who is the class for? Data, Software and AI Engineering, but not only

Since the course is quite hands-on, the target audience is someone who is comfortable reading and writing some code and who wants to build out privacy engineering in AI/ML workflows. I chose this style because I think there's an immediate need for people to build these skills at work, and I want to create a safe environment where you can practice and learn.

Want a taste of what we'll cover and how I teach? Check out my Maven Lightning Lesson on why privacy is such a hard problem to solve in AI systems and my Probably Private YouTube mini-course on security.

If you're not an engineer, that doesn't necessarily mean the course isn't for you. If you help shape policy and architecture (for example as a privacy analyst or a privacy and security architect), or if you are a privacy professional or product owner, I think your expertise will help drive different aspects of how privacy fits into AI systems.

Multidisciplinary teams are essential to any successful privacy program because it's often non-engineering roles that drive policy and process at an organization.

If you want to join the course but are intimidated by coding, know that there will be ways to partner and team up with others, as well as ways to contribute your knowledge to the larger conversations about how we build these systems.

What You'll Learn and Why

I've broken down the main concepts you'll learn, with some details on what each concept is and why it's important. Several concepts build on previous ones, so I've tried to keep them in the general order in which they will be taught.

| What you'll learn | Details |
|---|---|
| Getting started with local AI | Create a safe experimentation environment for testing new ideas |
| Architecting an AI product workflow | Practice architecture decisions and learn how AI products work |
| Generating synthetic data and initial evaluations | Evaluate synthetic data and generic evals as building blocks for privacy evaluations |
| Privacy attacks on AI systems | Learn how to run attacks focused on extracting confidential or sensitive information |
| Reviewing and changing architectures | Based on what you've learned, re-evaluate your architecture choices and make new designs |
| Evaluating basic protections (permissions, pseudonymization, input sanitization) | Determine when and how basic protections address the privacy and security issues exposed |
| Guardrails | Learn what guardrails can and cannot do and get hands-on practice using them in your setup |
| Advanced protections (other than guardrails): prompt minimization, routing and local options | Experiment with more advanced protections for privacy and update your architectures accordingly |
| Privacy testing and evaluations | Build evaluation and testing suites around your use case, tuned to the privacy requirements |
| Privacy monitoring and observability | Establish observability and monitoring best practices with privacy as the focus |

All of this will happen in hands-on labs with extra office hours for questions, deeper dives and experimentation. The course will have two main lessons per week along with time for questions. There will also be some at-home assignments, team projects and asynchronous conversations (via the Maven platform and Mattermost).

Feedback, Questions, Ideas very welcome!

I'd be happy to answer any questions you have. I'll try to keep this post updated as questions emerge to ensure the course is clear.

If you have feedback on the topics, or wish I had covered something you expected to see, feel free to write to me. You can email me or reach out on LinkedIn.